The comparison of kappa and PABAK with changes of the prevalence of the... | Download Scientific Diagram

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

Success and time implications of SpO2 measurement through pulse oximetry among hospitalised children in rural Bangladesh: Variability by various device-, provider- and patient-related factors — JOGH

Kappa values and Prevalence-adjusted Bias-adjusted kappa values for... | Download Scientific Diagram

Introducing pulse oximetry for outpatient management of childhood pneumonia: An implementation research adopting a district implementation model in selected rural facilities in Bangladesh - eClinicalMedicine

What does PABAK mean? - Definition of PABAK - PABAK stands for Prevalence-Adjusted Bias-Adjusted Kappa. By AcronymsAndSlang.com

The disagreeable behaviour of the kappa statistic - Flight - 2015 - Pharmaceutical Statistics - Wiley Online Library

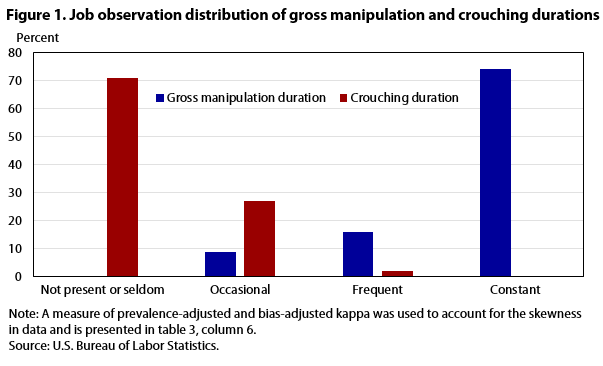

Occupational Requirements Survey: results from a job observation pilot test : Monthly Labor Review: U.S. Bureau of Labor Statistics

Validity of Actigraphy in Measurement of Sleep in Young Adults With Type 1 Diabetes | Journal of Clinical Sleep Medicine

Inter-observer variation can be measured in any situation in which two or more independent observers are evaluating the same thing. Kappa is intended to. - ppt download
